Data observability is the process of monitoring the situation and state of your data. This can help you improve your confidence in making data-driven decisions. Every organization depends on quality data for its daily operations. This is particularly true for data scientists and analysts who use this data to generate insights and analytics. If this data is not available or is incomplete, it can result in a breakdown in business processes.

Data Observability is a collection of activities and technologies that help you understand the health and the state of your data

Data observability refers to a set of activities and technologies that help you understand the quality, state, and health of your data in real time. It helps you detect and correct problems as they occur, and can improve the health and efficiency of your organization. Observability is especially useful in large organizations, where data landscapes are extremely complex and extensive.

Data observability can help you pinpoint problems within your machine learning models and helps you improve their accuracy and quality. It can also help you identify the root causes of problems, resulting in better decision-making. In the context of software development, Data Observability is a set of activities and technologies that helps you understand the state and health of your data.

Data observability can be used to improve software testing and development processes. It can help organizations identify and resolve performance issues and automate CI/CD processes. It can also be used to improve conversion optimization and ensure software releases are meeting external SLAs. Observability can also help you reduce the time you spend debugging in Production environments.

It provides end-to-end visibility into your data pipelines

Data observability is the ability to monitor and understand the health of your data pipelines from beginning to end. The benefits of observability go far beyond simply tracking data changes. It also provides a holistic view of system performance, enabling teams to find the root cause of downtime and resolve problems quickly.

Data observability is a key component of the DataOps process. It helps you monitor and measure the health of data, including the number of errors or downtime in pipeline processes. This can help you determine which data is clean and whether it’s contaminated with errors. The goal of observability is to eliminate these issues and ensure your data pipeline is always running at peak performance.

Data observability helps you eliminate downtime by monitoring your data from multiple sources in real time. Observability also helps you automate the monitoring, alteration, and triaging of enterprise data. FirstEigen, for example, offers an autonomous data observability solution that is integrated with a comprehensive data quality solution. Observability can also automate the replication of data from various data sources. The software comes with a range of connectors and is free to download and use.

It helps prevent data outages

Data Observability is an important technology that enables organizations to diagnose the health of their data value chain. Without data observability, companies could incur unanticipated costs and lose customers. With data observability, organizations can avoid such costly situations and maximize the use of existing data resources.

Data observability is a crucial component of DataOps. It helps organizations to ensure the quality of their data, preventing downtime, and minimizing errors. Using data observability ensures that data is up-to-date and distributed to the correct silos. Moreover, it makes it possible to trace the lineage of data and identify any errors.

Data observability helps organizations understand complex data scenarios and prevent data outages. With the use of automated rules, data observability can identify issues before they have an impact on the organisation. This type of technology can also help organizations determine the root cause of an issue, which can help them solve the problem. In addition, data observability helps organizations increase the level of trust in their data, thereby contributing to timely delivery of quality data.

It supports DataOps

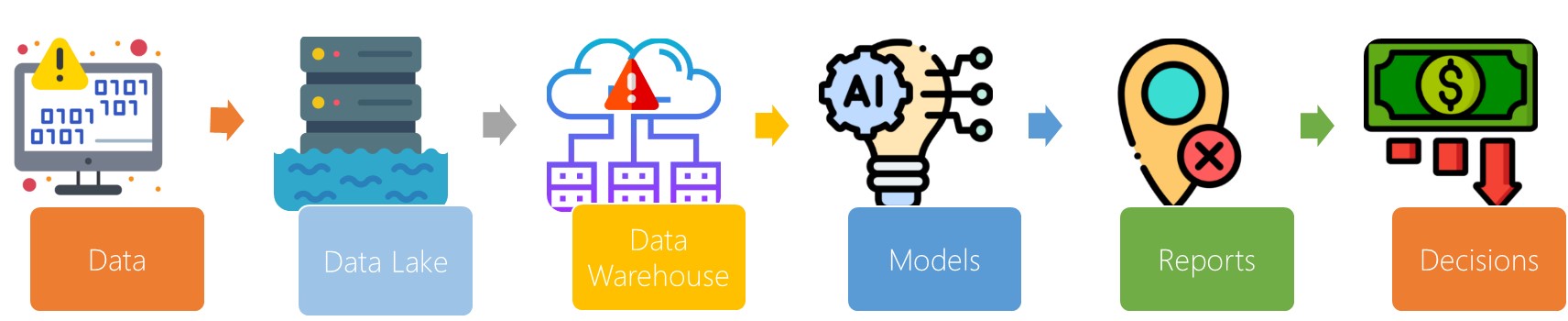

Data observability is crucial in the data pipeline, which ingests data from many sources, transforms it, enriches it, and makes it available for analytics, operations, and storage. Data observability allows data pipeline managers to maintain continuous visibility of all the stages in the pipeline, which minimizes the impact of any data issue on downstream applications. Downtime costs organizations $140K to $540K an hour, and Data observability helps prevent downtime by ensuring that data pipelines are running smoothly.

Data observability is defined as a set of activities aimed at monitoring the health and consistency of data. As companies become more reliant on data for decision-making and everyday operations, it is essential to ensure that it flows at the right pace and quality. This can be achieved through a data pipeline, which serves as a central highway for data and allows organizations to monitor and respond to changing data quality.

As data volumes increase, the need for data observability will become crucial for organizations of all sizes. High quality data is crucial to data-driven decision-making, and manually managing and monitoring data poses a significant risk to organizational health and decision-making processes. As a result, data observability will become a norm in data management, reducing data silos and facilitating collaboration among organizations.